Who's judging.

Engineers and leaders from Amazon, Google, Apple, Wayve, and more. People who've shipped real products, not just benchmarked them.

Manushi Sheth

Sonos

Data & AI leader with 6+ years leading product data spanning engineering, analytics, and ML.

Sumanth Kadulla

Cloud Infrastructure

8+ years building cloud infrastructure on AWS, Azure, and GCP. Technical judge for 25+ international conferences.

Deniz A. Akbasaran

Gorgias

Product & data specialist building evaluation frameworks for AI agents and optimizing LLM performance.

Nishant Sinha

Amazon

Engineering Lead & Sr Engineer building distributed and ML systems and deploying models at the edge.

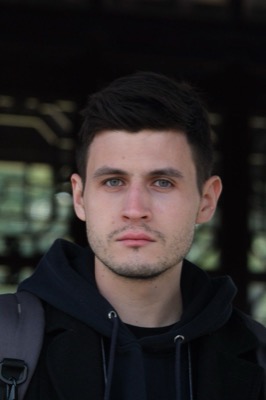

Mykhailo Shumilov

Vadimages

CTO & digital transformation leader. Expert in web development, system architecture, and emerging technologies.

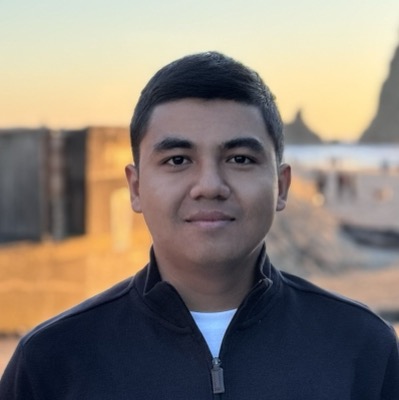

Vyom Mittal

Amazon

Principal Product Manager (Technical) with 15+ years leading identity, access governance, and zero trust for AI.

Duygu Unlu

FameTech

SAP solution architect with 12+ years engineering global ERP landscapes and digital transformation.

Anoop K. Pillai

Amazon

Sr Manager of Software Engineering leading 50+ engineers building EC2 pricing infrastructure. 11 US patents.

Maksim Tumakov (Security Judge)

DefenScope

Cybersecurity product leader focused on AI-driven products. Researcher with experience at Kaspersky and BI.ZONE.

Maneesh Singh

IT Leadership

IT leader with 20+ years across cloud, DevOps, AI/ML, and NLP in banking, travel, and healthcare.
